15 Best AI Coding Assistant Tools In 2026

TL;DR

- In 2026, there isn’t one “best” AI coding assistant. There are different tools optimized for different parts of the development lifecycle, and most teams mix them without a clear framework.

- Editor assistants like GitHub Copilot, JetBrains AI, Tabnine, Gemini Code Assist, and Amazon Q help generate functions, tests, and configurations while you write code.

- Repository-level agents like Cursor, Claude Code, Aider, and Devin handle multi-file refactors, debugging loops, and scoped task execution across a codebase.

- Security scanners like Snyk Code and AI code review platforms like Qodo focus on what happens before merge: validating pull requests with context-aware analysis, enforcing standards, and reducing code review risk at scale.

- Browser-based app builders (Replit, Bolt, Lovable) cover the other end of the spectrum: going from a prompt to a running prototype as quickly as possible.

In 2026, the question isn’t whether to use AI in your development workflow. It’s which tools to use, for what, and how to combine them without losing your mind.

Every week, there’s a new assistant, a new agent, a new “10x developer” promise. And most engineers I know are either under-tooled, still relying on one assistant for everything, or over-tooled, overwhelmed by overlapping capabilities with no clear mental model of what goes where.

I’ve been leading engineering teams through this exact transition. Testing tools, running them in dev workflows, figuring out where AI increases development velocity and where it quietly introduces the kind of failures that show up three PRs later. The answer is never “use more AI.” It’s using the right AI for each layer of your stack.

That’s what this guide is.

I’ve grouped and evaluated the tools that are important in 2026: IDE assistants, terminal agents, app builders, and review platforms, based on hands-on testing across real engineering scenarios. For each one, you’ll see what it’s genuinely built for, where it performs, and where it breaks down, so you don’t have to find out the hard way.

By the end, you’ll have a clear framework for building a coherent AI stack: the right tool for each job, no redundancy, no blind spots.

Best AI Coding Assistant Tools

- Qodo

- Snyk Code

- GitHub Copilot

- Cursor

- Windsurf

- JetBrains AI

- Tabnine

- Amazon Q Developer

- Gemini Code Assist

- Claude Code

- Aider

- Devin

- Replit

- Bolt

- Lovable

How I Selected the Best AI Coding Tools in this List

Most tools look capable in a pilot. Differences show up after weeks of real use across shared repos, team review cycles, and CI/CD under production pressure.

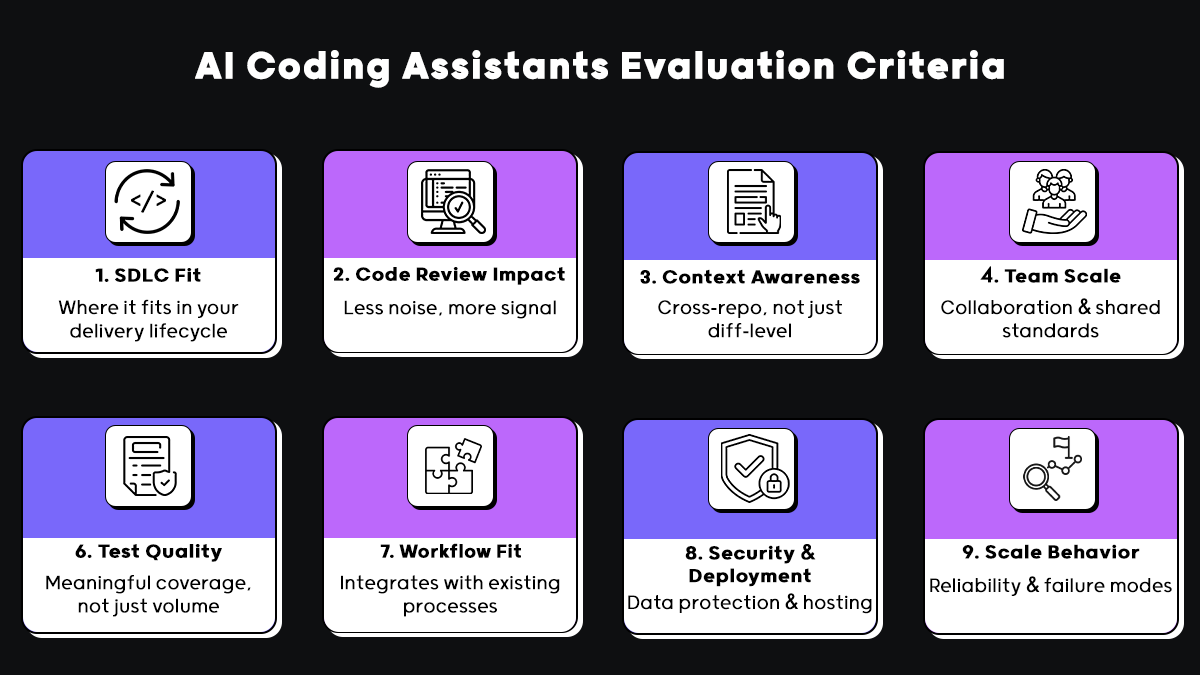

I evaluated each tool on eight criteria that reflect what actually matters at that stage:

- Role in the delivery lifecycle: Does it help at authoring, review, testing, or quality enforcement? Generation alone doesn’t equal quality.

- Impact on code review: Does it reduce reviewer load, or just add more code to review?

- Context depth: File-level context isn’t enough in real codebases. I looked at cross-repo awareness, service boundaries, and historical dependencies.

- Team-scale behavior: Tools that work great solo often break down when shared standards and coordinated workflows enter the picture.

- Test quality, not just quantity: Coverage that doesn’t reflect real execution paths isn’t coverage.

- Workflow integration: Does it fit into how your team already works, or does it require building around it?

- Security and deployment: Data handling, model transparency, and support for self-hosted or regulated environments.

- Behavior at scale: How does it fail? Quietly and unpredictably is worse than clearly and inspectably.

Tools that performed well in pilots but degraded under sustained, team-wide use are scored accordingly. That’s the filter everything in this guide went through.
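To make the "scored accordingly" idea concrete, here is a minimal Python sketch of one way such a rubric could be computed. The eight criterion names come from the list above, but the 0-5 scale, the 70/30 weighting toward sustained use, and the function name `overall_score` are assumptions for illustration, not the actual scoring method behind this guide.

```python
# The eight criteria come from the list above; the weighting and the
# 0-5 scores below are illustrative assumptions, not real evaluation data.
CRITERIA = [
    "lifecycle role",
    "code review impact",
    "context depth",
    "team-scale behavior",
    "test quality",
    "workflow integration",
    "security and deployment",
    "behavior at scale",
]


def overall_score(pilot, sustained, sustained_weight=0.7):
    """Blend pilot and sustained-use scores (0-5 per criterion), favoring sustained use."""
    missing = [c for c in CRITERIA if c not in pilot or c not in sustained]
    if missing:
        raise ValueError(f"missing scores for: {missing}")
    total = 0.0
    for criterion in CRITERIA:
        blended = (1 - sustained_weight) * pilot[criterion] + sustained_weight * sustained[criterion]
        total += blended
    return total / len(CRITERIA)


# Hypothetical tool: strong in the pilot, weaker after weeks of team-wide use.
pilot_scores = {c: 5 for c in CRITERIA}
sustained_scores = {c: 3 for c in CRITERIA}
print(round(overall_score(pilot_scores, sustained_scores), 2))
```

Weighting sustained use more heavily means a tool that impresses in a pilot but degrades later ends up closer to its sustained score than to its pilot score.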

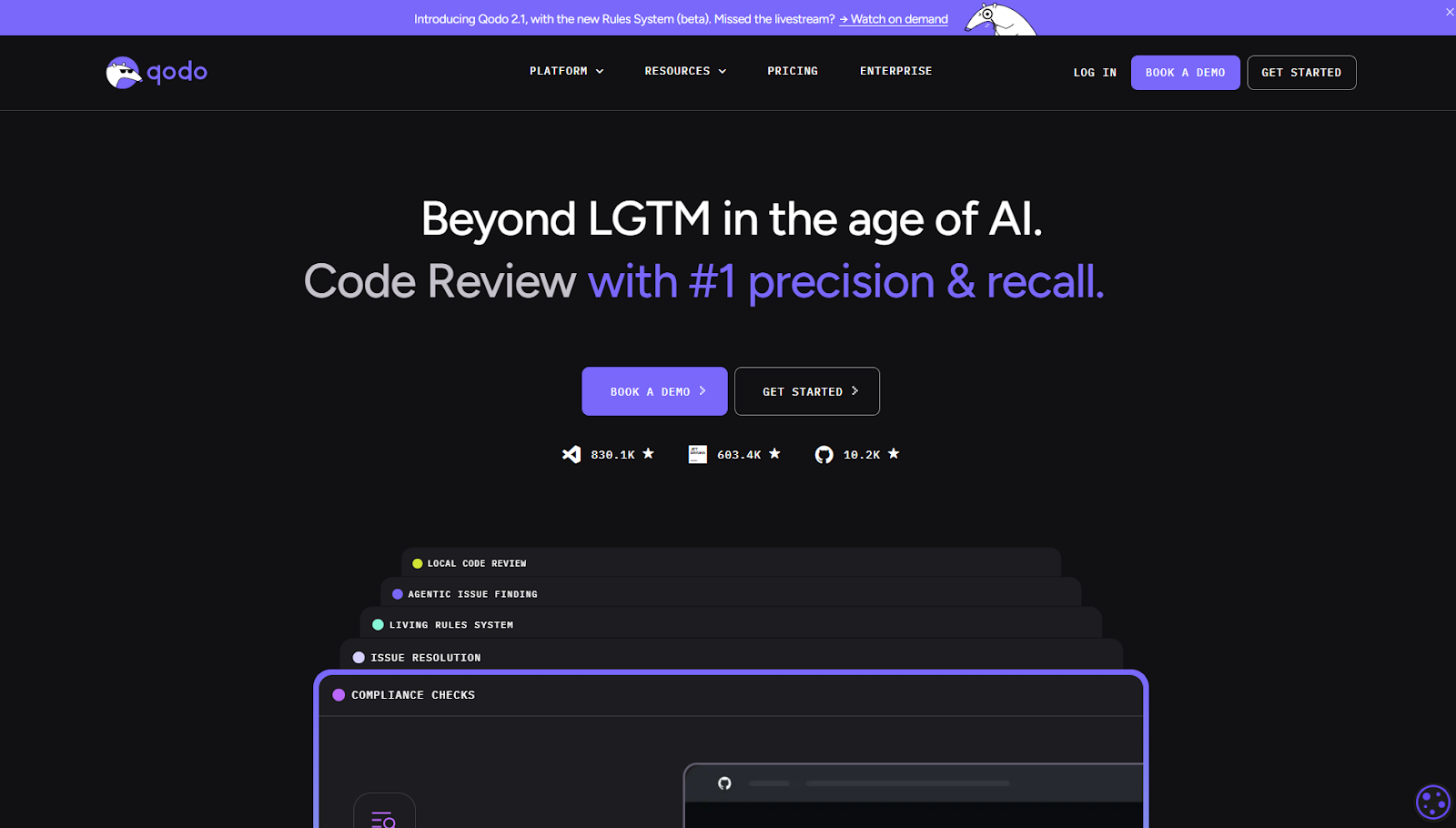

1. Qodo

Qodo is the AI Code Review Platform, the missing quality layer in your AI stack. It sits between “AI wrote it” and “production-ready”, focusing on validating, enforcing, and governing code changes before they are merged.

Best for

- High PR volume teams

- Multi-repo organizations

- CI/CD-integrated code review enforcement

Where Qodo Fits in the Delivery Lifecycle

- IDE Plugin: flags issues before a PR is opened, in IDEs such as VS Code or JetBrains

- Git Plugin: automated review directly in GitHub, GitLab, Bitbucket, or Azure DevOps PRs

- CLI Plugin: build custom review agents and automate quality workflows across your entire SDLC

Not for

- Individual developers looking for inline autocomplete or code generation

- Teams without structured PR workflows or CI/CD pipelines

What Qodo Does

Qodo automates the parts of code review that don’t scale with AI-generated output, so human review effort stays focused on architecture, risk, and decisions that actually require judgment.

- Automated PR review across code, diffs, and tests

- Missing and insufficient test coverage detection

- Organization-wide standards enforcement

- 15+ automated PR workflows, including validation and merge gating

- 1-click resolution for common review findings

- Agentic quality workflows via CLI

- Multi-repo indexing (using a RAG indexer), from a handful of repos to large, distributed codebases

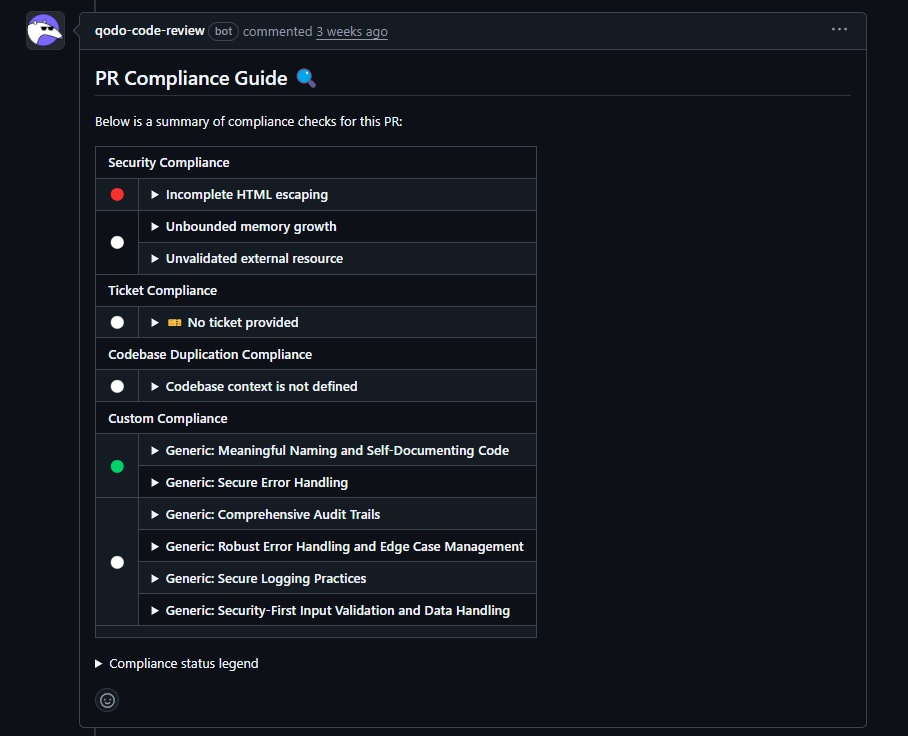

Hands-On: Qodo as a Pre-Merge Compliance Check

I ran Qodo on a GrapesJS pull request, a large open-source web builder with dynamic HTML rendering, CDN usage, and user-supplied content. The kind of codebase where security issues hide.

Instead of inline comments, Qodo posted a single structured PR Compliance Guide as shown in the snapshot below:

It was not a line-by-line review but a direct answer to one question: Is this change ready to merge?

Three signals worth noting:

- Security Compliance flagged incomplete HTML escaping, a real XSS risk, not a theoretical one

- Ticket Compliance flagged a missing linked issue, a process gap, not a code problem

- Checks that couldn’t be evaluated were marked unresolved, not skipped. It won’t guess where context is missing.

The result protects code quality by flagging risks and process failures while the cost of fixing them is still low. It needs upfront configuration, but that’s the point: it’s built to sit between fast AI coding and production-ready code.
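The difference between "unresolved" and "skipped" is easier to see in code. The sketch below is a hypothetical three-state merge gate in Python. It is not Qodo's implementation or API: the `Status` enum, the `merge_readiness` function, and the check names are all assumptions chosen to mirror the signals described above.

```python
from enum import Enum


class Status(Enum):
    PASSED = "passed"
    FAILED = "failed"
    UNRESOLVED = "unresolved"  # the check lacked context; not the same as a pass


def merge_readiness(checks):
    """Summarize named check results into a single merge decision.

    `checks` maps a check name to a Status. Any failure blocks the merge;
    unresolved checks are surfaced for a human instead of being dropped.
    """
    failed = sorted(name for name, status in checks.items() if status is Status.FAILED)
    unresolved = sorted(name for name, status in checks.items() if status is Status.UNRESOLVED)
    if failed:
        decision = "blocked"
    elif unresolved:
        decision = "needs human review"
    else:
        decision = "ready"
    return {"decision": decision, "failed": failed, "unresolved": unresolved}


# Hypothetical results mirroring the signals described above.
report = merge_readiness({
    "security": Status.FAILED,      # incomplete HTML escaping
    "ticket": Status.FAILED,        # no linked issue
    "license": Status.UNRESOLVED,   # hypothetical check with missing context
})
print(report)
```

Keeping unresolved checks as their own state means a reviewer sees exactly which questions the automation could not answer, rather than assuming silence means success.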

Pricing

- Developer: Free (limited PR reviews and monthly credits)

- Teams: ~$30/user/month (higher PR limits, increased credits, collaboration features)

- Enterprise: Custom pricing (multi-repo context, SSO, advanced controls, on-prem/air-gapped options)

Pricing varies based on usage limits and deployment model.

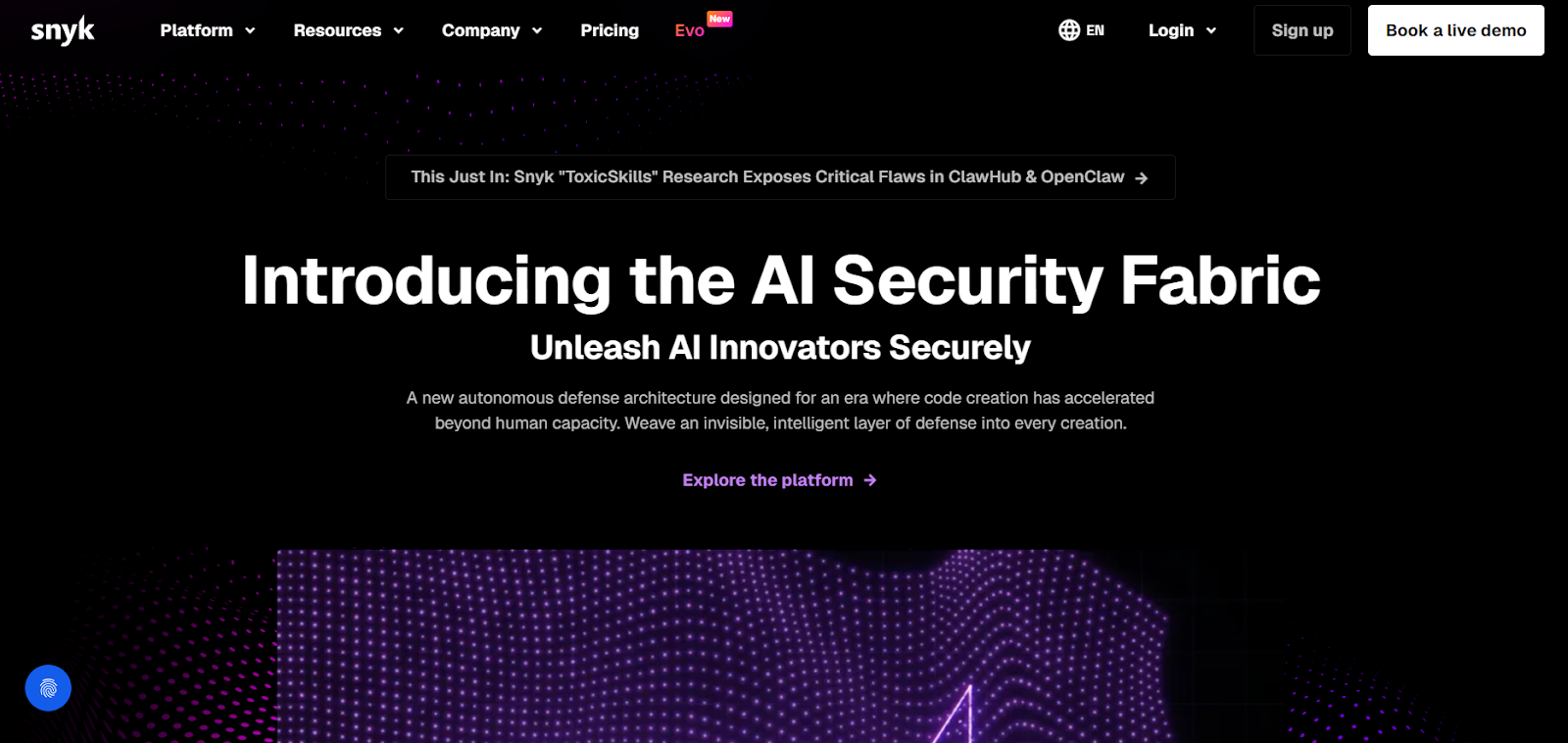

2. Snyk Code

Snyk Code is a SAST (Static Application Security Testing) tool that scans source code to find security vulnerabilities before changes are merged. It is built to detect exploitable issues early in the development cycle and flag them directly inside developer workflows.

Best for

- Teams that need automated security scanning in pull requests

- Organizations already using Snyk for dependency or container security

- CI/CD environments where security checks must run on every build

Where Snyk Code Fits in the Delivery Lifecycle

- IDE Plugin: surfaces security issues while writing code (VS Code, JetBrains, Visual Studio)

- Git Plugin: adds SAST findings directly inside GitHub, GitLab, or Bitbucket pull requests

- CLI: runs security scans in CI/CD pipelines

- Web Dashboard: a central place to track, prioritize, and manage vulnerabilities

Not for

- Teams looking for architectural review or design validation

- Workflows focused on test coverage analysis

- Organizations expecting full code governance or merge-readiness evaluation

What Snyk Code Does

Snyk Code analyzes how data flows through your application to detect vulnerabilities.

- Detects XSS, SQL injection, command injection, and unsafe input handling

- Identifies hardcoded secrets

- Maps findings to CWE categories

- Ranks issues based on exploitability and impact

- Provides remediation guidance for each finding

It is focused specifically on source-code security analysis.

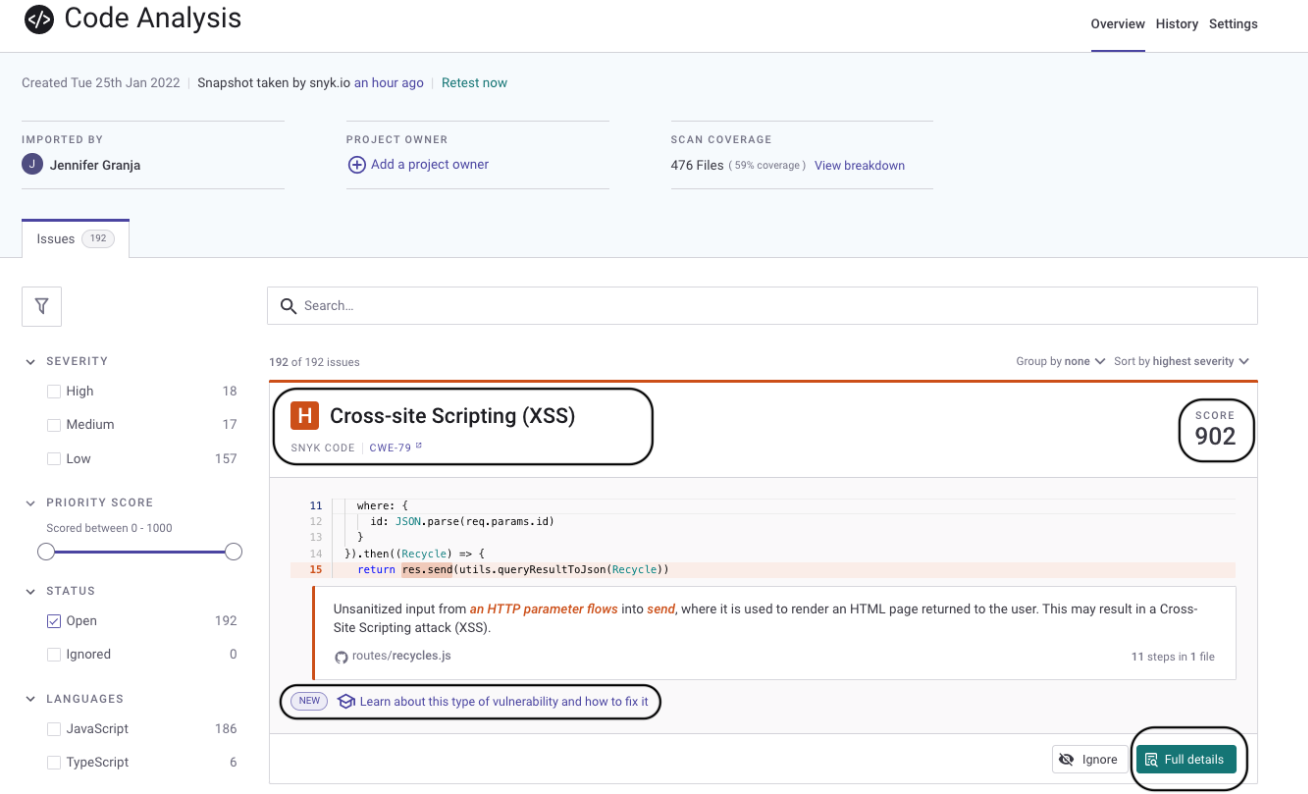

Hands-On: Snyk Code in a Repository Scan

I ran Snyk Code against a JavaScript-heavy repository to evaluate how it behaves in a real development workflow.

The scan reported 192 issues across 476 files. The interface also showed that approximately 59% of the repository had been analyzed, which made the scan’s coverage explicit.

One high-priority finding was a Cross-Site Scripting vulnerability (CWE-79). Snyk traced user input from:

req.params.id

into:

res.send()

The report displayed the full data flow path from the source of the input to the execution point where it became unsafe. The finding included a priority score and remediation guidance.

Based on the above example, Snyk Code functions as an automated SAST check embedded into pull requests and CI pipelines. It detects vulnerabilities but does not evaluate architecture or overall code quality.
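The finding above is a source-to-sink flow: untrusted input reaches an HTML response without escaping. The sketch below reproduces that pattern in Python rather than the repository's JavaScript, purely for illustration. Both handlers are hypothetical: the `user_id` argument stands in for req.params.id, and the returned string stands in for the body passed to res.send().

```python
import html


def render_profile_unsafe(user_id: str) -> str:
    # Source flows straight to the sink: attacker-controlled text lands in HTML.
    return f"<h1>Profile {user_id}</h1>"


def render_profile_safe(user_id: str) -> str:
    # Escape at the output boundary so markup characters render as plain text.
    return f"<h1>Profile {html.escape(user_id)}</h1>"


payload = "<script>alert(1)</script>"
print(render_profile_unsafe(payload))
print(render_profile_safe(payload))
```

The unsafe version returns the script tag intact, while the safe version returns it as inert text. A data-flow scanner flags the first shape because nothing sanitizes the value between the source and the sink.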

Pricing

- Developer (Free): Limited usage for individuals and small projects

- Team / Pro: Paid plans with higher scan limits, CI integration, and reporting

- Enterprise: Custom pricing with governance, compliance features, and support

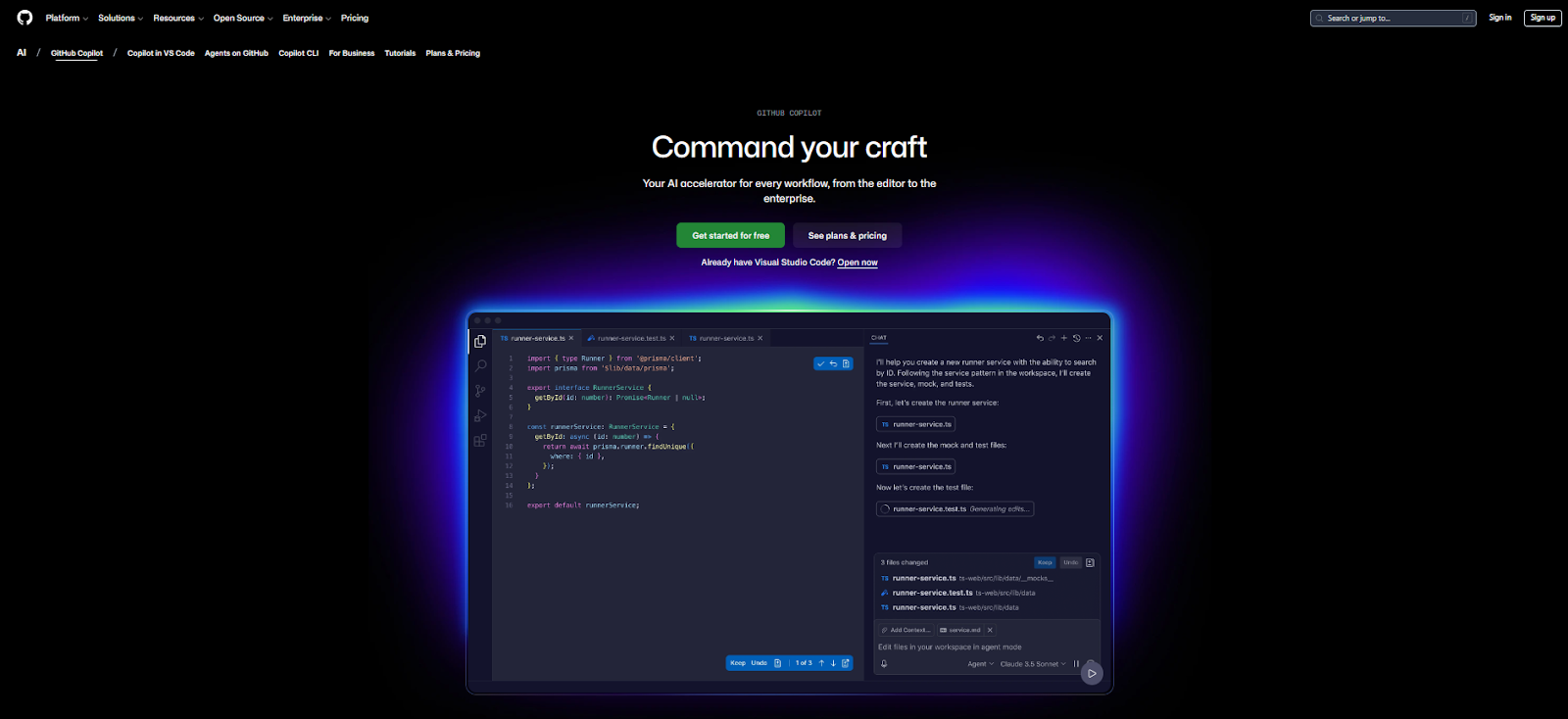

3. GitHub Copilot

GitHub Copilot is an AI coding assistant that works inside your editor. It generates code from comments and surrounding context, including full functions, tests, and configuration files. It reduces time spent writing boilerplate and repetitive logic, but it does not review or validate the code it produces.

Best for

- Developers who want help writing code faster inside the IDE

- Teams looking to reduce manual boilerplate and repetitive patterns

- Organizations adopting AI with minimal workflow changes

Where GitHub Copilot Fits in the Delivery Lifecycle

- IDE Integration: inline suggestions in VS Code, JetBrains, and Visual Studio

- Editor Chat: explain code, generate snippets, or refactor inside the editor

- Autocomplete Engine: predicts and completes blocks of code as you type

Copilot works during code creation. It does not act on pull requests or block merges.

Not for

- Teams expecting automated PR review or merge enforcement

- Workflows requiring architectural validation

- Organizations needing compliance or CI-level quality gates

What GitHub Copilot Does

Copilot uses the current file and nearby context to generate code suggestions.

- Generates full functions and method implementations

- Completes loops, conditionals, and common patterns

- Helps write tests and configuration files

- Explains or rewrites code inside the editor

- Supports many languages and frameworks

It improves authoring speed but does not check whether the code meets system-wide constraints.

Hands-On: Generating Terraform with Copilot

I tested Copilot inside Visual Studio Code to scaffold a Terraform configuration.

Prompt:

Create Terraform config for a Google Cloud Storage bucket named logs with versioning enabled.

Copilot generated:

provider "google" {
  project = var.project_id
  region  = var.region
}

resource "google_storage_bucket" "logs" {
  name     = "logs"
  location = var.region

  versioning {
    enabled = true
  }
}

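The configuration was syntactically valid. The snippet references var.project_id and var.region without declaring them, so after adding those variable declarations and setting their values, it could be applied with terraform init and terraform apply.

Copilot handled the scaffolding correctly. It did not check naming conventions, IAM policies, or compliance requirements. For example, Cloud Storage bucket names share a global namespace, so a name as generic as logs would likely need to change before a real deployment.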
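A naming-convention check is the kind of gap a small script or CI step can close. The Python sketch below validates a bucket name against a subset of the Cloud Storage naming rules, plus a team prefix. The `check_bucket_name` function and the `acme-prod-` prefix are hypothetical, and the regular expression deliberately covers only the basic length and character rules.

```python
import re

# Subset of the Cloud Storage naming rules: 3-63 characters made of
# lowercase letters, digits, dashes, underscores, and dots, starting and
# ending with a letter or digit. Other documented rules are omitted here.
_BUCKET_NAME = re.compile(r"[a-z0-9][a-z0-9._-]{1,61}[a-z0-9]")


def check_bucket_name(name, required_prefix):
    """Return a list of problems; an empty list means the name passes."""
    problems = []
    if not _BUCKET_NAME.fullmatch(name):
        problems.append("does not match the basic bucket name format")
    if not name.startswith(required_prefix):
        problems.append(f"missing team prefix '{required_prefix}'")
    return problems


# "acme-prod-" is a hypothetical team convention.
print(check_bucket_name("logs", "acme-prod-"))
print(check_bucket_name("acme-prod-logs", "acme-prod-"))
```

The generated name logs passes the format rule but fails the team convention, which is exactly the kind of issue an authoring assistant will not raise on its own.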

Pricing

- Free: $0/month (limited completions and chat requests)

- Pro: ~$10/month (individual plan with higher limits)

- Business: ~$19/user/month (organization-wide license and policy management)

- Enterprise: ~$39/user/month (additional enterprise customization and controls)

Copilot is billed separately from GitHub’s own Team and Enterprise platform plans.

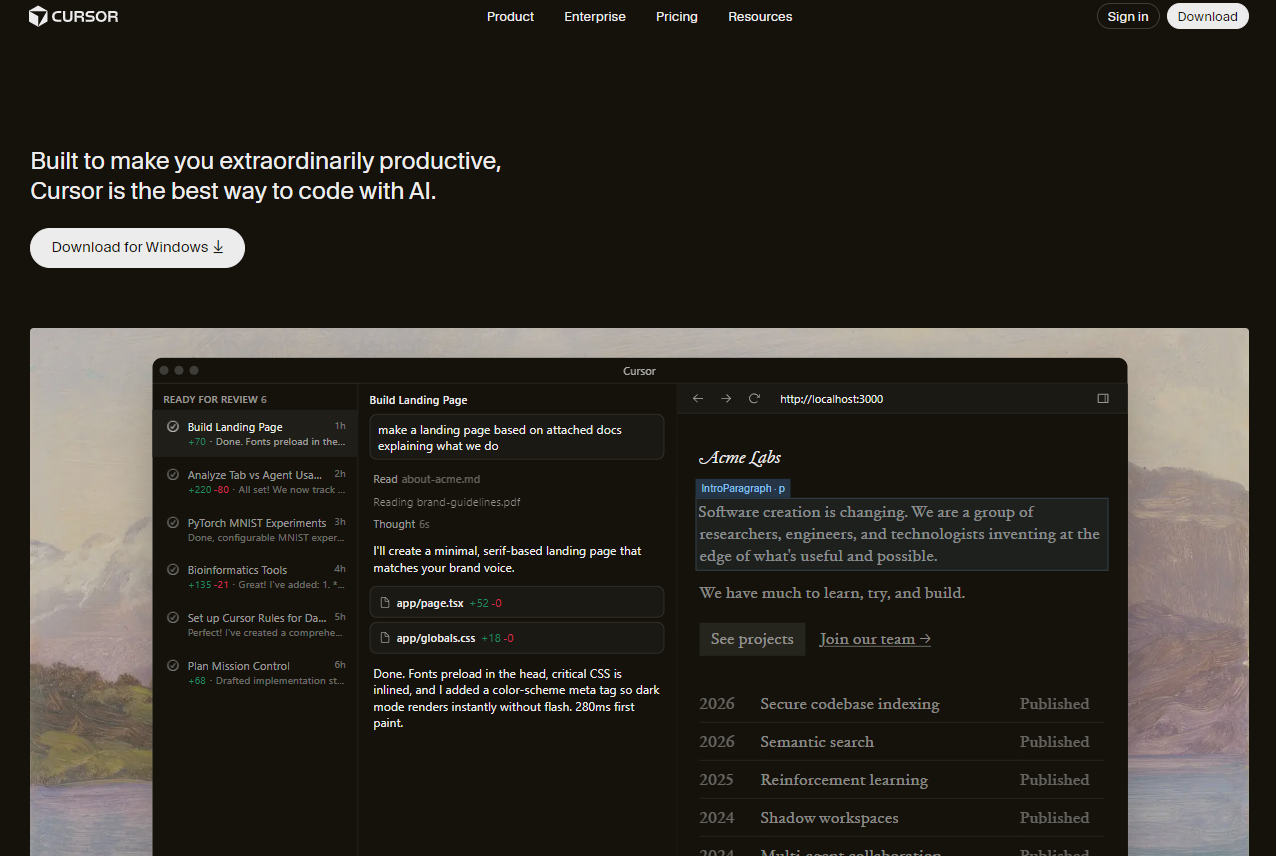

4. Cursor

Cursor is a code editor with built-in AI capabilities. It lets you issue natural language commands inside the editor to generate, edit, or refactor code across multiple files. Unlike simple autocomplete tools, Cursor can apply structured changes to existing code within a repository.

Best for

- Developers working in large or unfamiliar codebases

- Teams doing frequent refactors

- Engineers who want AI assistance beyond single-file autocomplete

Where Cursor Fits in the Delivery Lifecycle

- Editor-Level AI: chat and inline editing inside the editor

- Repository Context: reads multiple files within the current project

- Agent Mode: proposes multi-file edits based on instructions

Cursor operates during code authoring. It does not review pull requests or block merges.

Not for

- Teams required to use a specific corporate IDE

- Organizations expecting PR enforcement or CI/CD gating

- Fully air-gapped environments without additional deployment work

What Cursor Does

Cursor analyzes the current repository and applies code changes based on instructions.

- Generates new functions and updates existing ones

- Modifies code across multiple files

- Explains how specific parts of the codebase work

- Suggests structured refactors

- Maintains session context while iterating on changes

It focuses on editing and refactoring code inside the repository.

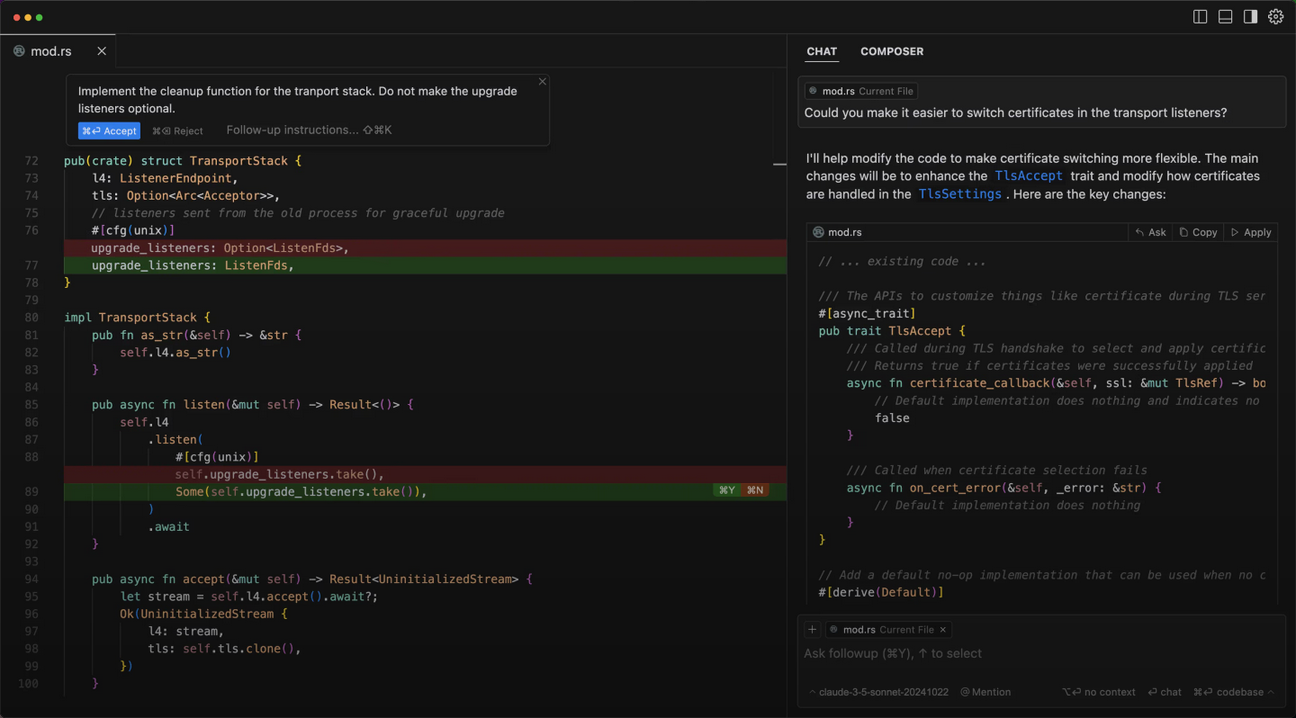

Hands-On: Refactoring a Rust Codebase

I tested Cursor in a Rust repository while modifying transport-layer logic. Inside the editor, I gave an instruction to implement a cleanup function while preserving upgrade listener behavior.

Cursor analyzed the file and proposed inline edits. It displayed added and removed lines using standard diff markers. The changes updated existing traits and configuration handling without rewriting unrelated sections.

The edits were not shown as a detached snippet. They were applied directly to the relevant parts of the file, and I could accept or reject them.

While testing:

- It handled multi-line edits within existing logic

- It respected the constraints given in the prompt

- It maintained context across follow-up instructions

The changes still required normal testing and review. Cursor assists with repository-level editing but does not validate system-wide impact.

Pricing

- Pro: $20/user/month (expanded usage limits and premium model access)

- Pro+ / Ultra: Higher-tier plans with larger usage limits

- Teams: $40/user/month (shared workspaces, admin controls, SSO support)

- Enterprise: Custom pricing (org-level controls, compliance features, priority support)

5. Windsurf

Windsurf is an AI-native code editor. It includes an integrated AI panel called Cascade for chat, code generation, and multi-file edits inside the editor. It focuses on helping developers modify and refactor code directly within a project.

Best for

- Developers who want in-editor chat and multi-file edits

- Teams willing to adopt a new editor for deeper AI integration

- Projects that involve structural refactorings across files

Where Windsurf Fits in the Delivery Lifecycle

- Editor-Level AI: chat and code edits inside the editor

- Cascade Panel: central place for prompts and guided edits

- Multi-File Editing: applies structured changes across files

Windsurf operates during code authoring. Pull request review and CI enforcement happen separately.

Not for

- Teams required to use a specific corporate IDE

- Organizations expecting PR enforcement or CI/CD gating

- Environments that cannot introduce a new editor

What Windsurf Does

Windsurf analyzes the current repository and applies edits based on instructions.

- Generates new files or updates existing ones

- Applies multi-file refactors

- Shows a preview of changes before applying them

- Supports SSH and dev container workflows

- Allows importing settings from VS Code or Cursor

It improves editing and refactoring inside a single repository.

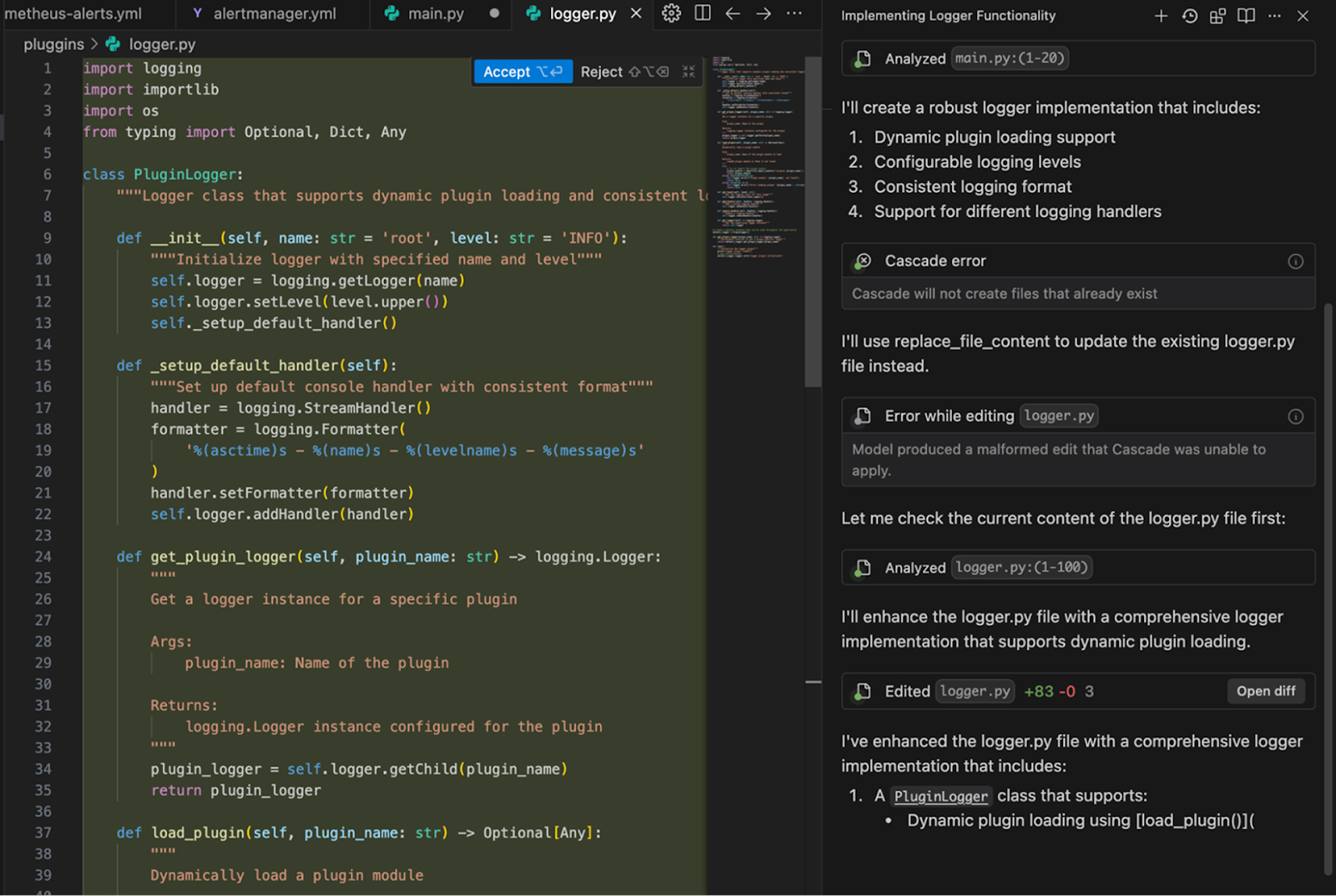

Hands-On: Refactoring Logging in a Python Project

I used Windsurf in a Python project to replace scattered logging calls with a centralized PluginLogger class. From the Cascade panel, I described the change in plain language. Windsurf analyzed logger.py and proposed inline edits with added and removed lines clearly marked.

It generated a reusable logger structure like this:

import logging

class PluginLogger:
    # Wraps a named logger so every plugin shares one setup path.
    def __init__(self, name, level="INFO"):
        self.logger = logging.getLogger(name)
        self.logger.setLevel(level)

When an earlier edit attempt failed, Cascade displayed the error and regenerated the patch instead of applying a broken change.

After accepting the update, I ran the test suite and reviewed the diff. Windsurf reduced the time needed to restructure the code, but validation still required normal testing and review.
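To show how a class like this replaces scattered logging calls, here is a minimal, self-contained Python sketch that builds on the snippet above. The `info` and `error` convenience methods, the `stream` argument, the handler setup, and the plugin name `exporter` are assumptions added for illustration. They were not part of Windsurf's output.

```python
import io
import logging


class PluginLogger:
    """Central wrapper so plugins share one logging setup."""

    def __init__(self, name, level="INFO", stream=None):
        self.logger = logging.getLogger(name)
        self.logger.setLevel(level)
        self.logger.propagate = False  # avoid duplicate output via the root logger
        if not self.logger.handlers:  # avoid stacking handlers on repeated construction
            handler = logging.StreamHandler(stream)
            handler.setFormatter(logging.Formatter("%(name)s %(levelname)s %(message)s"))
            self.logger.addHandler(handler)

    def info(self, message):
        self.logger.info(message)

    def error(self, message):
        self.logger.error(message)


# Before: logging.info(...) calls scattered across modules.
# After: each module asks for a named PluginLogger instead.
buffer = io.StringIO()
log = PluginLogger("exporter", stream=buffer)
log.info("export started")
log.logger.debug("hidden at INFO level")
print(buffer.getvalue(), end="")
```

Routing every plugin through one class means the format and level are changed in a single place, which is the main payoff of this kind of refactor.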

Pricing

- Free: $0/month (25 prompt credits, unlimited completions)

- Pro: $15/user/month (500 prompt credits, premium models)

- Teams: $30/user/month (admin controls, shared billing, usage insights)

- Enterprise: Custom pricing (SSO, RBAC, higher limits, advanced controls)

6. JetBrains AI

JetBrains AI adds AI features directly inside JetBrains IDEs such as IntelliJ IDEA, PyCharm, and WebStorm. It builds on the IDE’s existing project indexing, inspections, and refactoring tools. It assists while writing and modifying code, but does not replace review or CI processes.

Best for

- Teams already standardized on JetBrains IDEs

- Developers who rely on JetBrains inspections and refactor tools

- Organizations that want AI features without switching editors

Where JetBrains AI Fits in the Delivery Lifecycle

- IDE Integration: AI suggestions inside IntelliJ, PyCharm, WebStorm

- Inline Assistance: code generation, explanations, test creation

- Task Support: structured multi-step assistance inside the IDE

JetBrains AI operates during code authoring. Pull request review and CI enforcement remain separate.

Not for

- Teams looking for an AI-first editor with autonomous multi-file agents

- Organizations expecting IDE-level governance or merge gating

- Multi-repo enforcement or cross-org policy validation

What JetBrains AI Does

JetBrains AI works alongside the IDE’s language intelligence.

- Generates code and test cases

- Explains existing code using project context

- Suggests follow-up edits after a change

- Uses IDE indexing for better symbol and type awareness

- Works with built-in inspections and refactor tools

It improves implementation speed inside a single project.

Pricing

- IntelliJ IDEA Ultimate: ~$719/user/year (commercial license)

- All-Products Pack: ~$709–$960/user/year depending on plan

- Toolbox Subscription: subscription management across IDEs

- JetBrains AI Add-ons: optional AI plans (AI Pro, AI Ultimate) priced annually per user

JetBrains licenses are subscription-based, with a perpetual fallback license after 12 months of subscription.

7. Tabnine

Tabnine is an AI code assistant that runs inside existing IDEs. It focuses on inline code completions and predictable suggestions, with strong privacy and deployment controls. It is designed for teams that want AI assistance without changing editors or workflows.

Best for

- Organizations with strict data privacy or compliance requirements

- Teams that want AI completions inside their current IDE

- Enterprises needing admin controls and flexible deployment options

Where Tabnine Fits in the Delivery Lifecycle

- IDE Plugin: inline completions while typing

- Function Suggestions: generates full functions from context

- Lightweight Chat: small tasks such as documentation or schema generation

Tabnine operates during code authoring. It does not participate in PR review or CI/CD enforcement.

Not for

- Teams looking for multi-file autonomous edits

- Workflows centered on agent-style automation

- Cross-repository reasoning or governance enforcement

What Tabnine Does

Tabnine analyzes local and project-level context to suggest code.

- Provides real-time inline completions

- Suggests full functions and repetitive patterns

- Adapts to team coding style over time

- Optional chat for small generation tasks

- Supports SaaS, VPC, on-prem, and air-gapped deployments

It focuses on controlled, predictable assistance.

Pricing

- Starter (Free): $0/month (basic inline completions)

- Pro: ~$12/user/month (natural-language prompts and IDE chat)

- Enterprise: ~$39/user/month (admin controls, SSO, flexible deployment options)

Enterprise pricing varies based on deployment model and security requirements.

8. Amazon Q Developer

Amazon Q Developer is an AI coding assistant integrated with AWS tools and supported IDEs. It helps developers write and understand code while maintaining a strong awareness of AWS services, SDKs, and cloud patterns. It is most useful in projects that run primarily on AWS.

Best for

- Teams building applications on AWS

- Developers working with AWS SDKs, IAM, and cloud infrastructure

- Organizations already invested in AWS tooling

Where Amazon Q Developer Fits in the Delivery Lifecycle

- IDE Integration: inline suggestions and explanations

- Chat Assistance: guidance on AWS APIs and service usage

- AWS-Aware Code Generation: infrastructure and backend scaffolding

Amazon Q operates during development. It does not review pull requests or enforce CI/CD rules by default.

Not for

- Teams working mainly outside AWS

- Cloud-agnostic development environments

- Organizations expecting governance or merge enforcement

What Amazon Q Developer Does

Amazon Q generates and explains code with AWS context.

- Writes backend services using AWS SDKs

- Explains IAM policies and service integrations

- Helps migrate or modernize code

- Provides cloud-native guidance

- Integrates with AWS authentication and tooling

It is domain-specific to AWS rather than general-purpose across all platforms.

Pricing

- Free Tier: $0/month (limited monthly agent interactions)

- Pro: ~$19/user/month (higher limits, pooled usage, enterprise controls)

Additional usage beyond the included quota is billed based on consumption.

9. Gemini Code Assist

Gemini Code Assist is Google’s AI coding assistant integrated into VS Code, JetBrains IDEs, and Android Studio. It provides inline code suggestions, chat-based help, and deeper integration with Google Cloud services. It is most relevant for teams building on Google Cloud.

Best for

- Teams developing on Google Cloud

- Developers working with BigQuery, Cloud Run, Firebase, or GCP APIs

- Organizations already using Google’s developer ecosystem

Where Gemini Code Assist Fits in the Delivery Lifecycle

- IDE Integration: inline completions and code generation

- Chat Assistance: explain code, generate snippets, or troubleshoot errors

- Cloud-Aware Support: guidance for Google Cloud services and SDKs

It operates during code authoring. It does not review pull requests or enforce CI/CD rules.

Not for

- Teams primarily building on AWS or Azure

- Organizations looking for PR-level enforcement

- Cross-repository governance or architectural validation

What Gemini Code Assist Does

Gemini generates code using the open files and local project context.

- Suggests functions and code blocks

- Generates tests and documentation

- Explains unfamiliar code

- Provides Google Cloud–specific guidance

- Supports conversational refinement inside the editor

Enterprise tiers can use private repository context to improve suggestions.

Pricing

- Free: Limited usage inside supported IDEs

- Standard: Higher limits and Google Cloud integration features

- Enterprise: Private repo context, enterprise controls, and governance options

Pricing varies based on usage and edition.

10. Claude Code

Claude Code is Anthropic’s terminal-based AI coding tool. It runs as a CLI and can read, edit, and execute code across a repository under developer supervision. It is built for agent-style workflows, where the AI performs multi-step tasks instead of just suggesting lines of code.

Best for

- Engineers comfortable working from the terminal

- Multi-file refactors and coordinated edits

- Debugging or setup tasks that require edit-run-inspect cycles

Where Claude Code Fits in the Delivery Lifecycle

- CLI Interface: natural-language instructions from the terminal

- Repository Access: reads and edits files directly

- Command Execution: runs scripts or project commands during iteration

Claude Code operates during development and refactoring. Pull request review and CI validation remain separate.

Not for

- Developers who only want inline autocomplete

- Teams expecting automatic governance or merge enforcement

- Workflows where AI changes must be fully autonomous without supervision

What Claude Code Does

Claude Code coordinates tasks across a repository.

- Opens and modifies multiple files

- Generates configuration and setup code

- Runs project commands

- Shows diffs before applying changes

- Works with Git workflows

It behaves more like an automation tool than a passive assistant.

Hands-On: Setting Up Jest in a TypeScript Repository

Inside a TypeScript project, I ran:

Setup Jest for this repo and configure test matching.

Claude Code scanned the repository and proposed a jest.config.js file, as shown in the snapshot below:

It generated a configuration using ts-jest, configured the test environment to Node, and included patterns for both .test.ts and .spec.ts. Before writing the file, it showed the full diff and asked for confirmation.

module.exports = {
  preset: 'ts-jest',
  testEnvironment: 'node',
  testMatch: ['**/?(*.)+(spec|test).ts'],
};

After approval, the file was written to the project. I ran the test command manually to verify everything worked. The tool handled setup quickly, but I still reviewed the configuration and adjusted paths to match the repository structure.
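When reviewing a generated testMatch pattern, it helps to check which files it would actually pick up. The Python sketch below uses a regular expression that approximates the glob from the configuration above. Jest's own glob matching is the authority here, so treat this translation, the `is_test_file` helper, and the example paths as assumptions for illustration only.

```python
import re

# Approximate regex equivalent of the glob '**/?(*.)+(spec|test).ts':
# any directory prefix, an optional "name." part, then spec or test, then ".ts".
TEST_FILE = re.compile(r"(?:.*/)?(?:[^/]*\.)?(?:spec|test)+\.ts")


def is_test_file(path):
    """Return True if the path would plausibly be picked up by the testMatch glob."""
    return TEST_FILE.fullmatch(path) is not None


# Hypothetical file paths.
for path in ["src/user.test.ts", "src/user.spec.ts", "src/user.ts", "src/user.test.tsx"]:
    print(path, is_test_file(path))
```

Note that the pattern only covers .ts files, so a React project with .tsx tests would need the pattern widened before those tests run.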

Pricing

- Free: Limited usage for individuals

- Pro: ~$20/user/month (higher limits and priority access)

- Max: ~$100–$200/user/month (larger usage capacity)

- Team: ~$25–$30/user/month (shared billing and admin controls)

- Enterprise: Custom pricing (SSO, RBAC, audit logs, higher limits)

11. Aider

Aider is an open-source, terminal-based AI coding tool that works directly with Git. You interact with it from the CLI, and it proposes or applies code changes as tracked diffs inside your repository. It is designed for developers who want AI assistance without leaving the terminal.

Best for

- Developers who work primarily in Git and the CLI

- Teams that prefer reviewing diffs before accepting AI changes

- Open-source or self-hosted environments

Where Aider Fits in the Delivery Lifecycle

- CLI Interface: natural-language prompts in the terminal

- Git Integration: edits files and stages changes as diffs

- Model Flexibility: works with different LLM providers

Aider operates during development. Pull request review and CI validation remain separate.

Not for

- Developers who prefer IDE-native assistance

- Fully autonomous agent workflows

- Teams expecting built-in governance or SaaS management

What Aider Does

Aider reads the current repository state and applies controlled edits.

- Modifies multiple files in one task

- Shows diffs before committing

- Generates commit messages

- Keeps all changes inside Git history

- Can be configured with self-hosted or approved models

It focuses on transparent, reviewable edits rather than automation.

Pricing

Aider is open source and free to use. Costs depend on the language model provider you configure (for example, API usage from OpenAI, Anthropic, or other supported models).
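Because the cost sits entirely with the model provider, a rough per-session estimate is simple arithmetic on token counts. The Python sketch below shows the calculation. The token counts and per-million-token prices are placeholder values, not quotes from any provider, and `estimate_cost` is a hypothetical helper.

```python
def estimate_cost(input_tokens, output_tokens, input_price_per_million, output_price_per_million):
    """Estimate API spend in dollars from token counts and per-million-token prices."""
    input_cost = input_tokens / 1_000_000 * input_price_per_million
    output_cost = output_tokens / 1_000_000 * output_price_per_million
    return input_cost + output_cost


# Hypothetical session: 400k tokens read, 50k tokens written,
# at placeholder prices of $3 and $15 per million tokens.
cost = estimate_cost(400_000, 50_000, 3.0, 15.0)
print(f"${cost:.2f}")
```

Input tokens usually dominate in repository work because the model reads far more code than it writes, so the amount of context sent per request tends to drive the bill.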

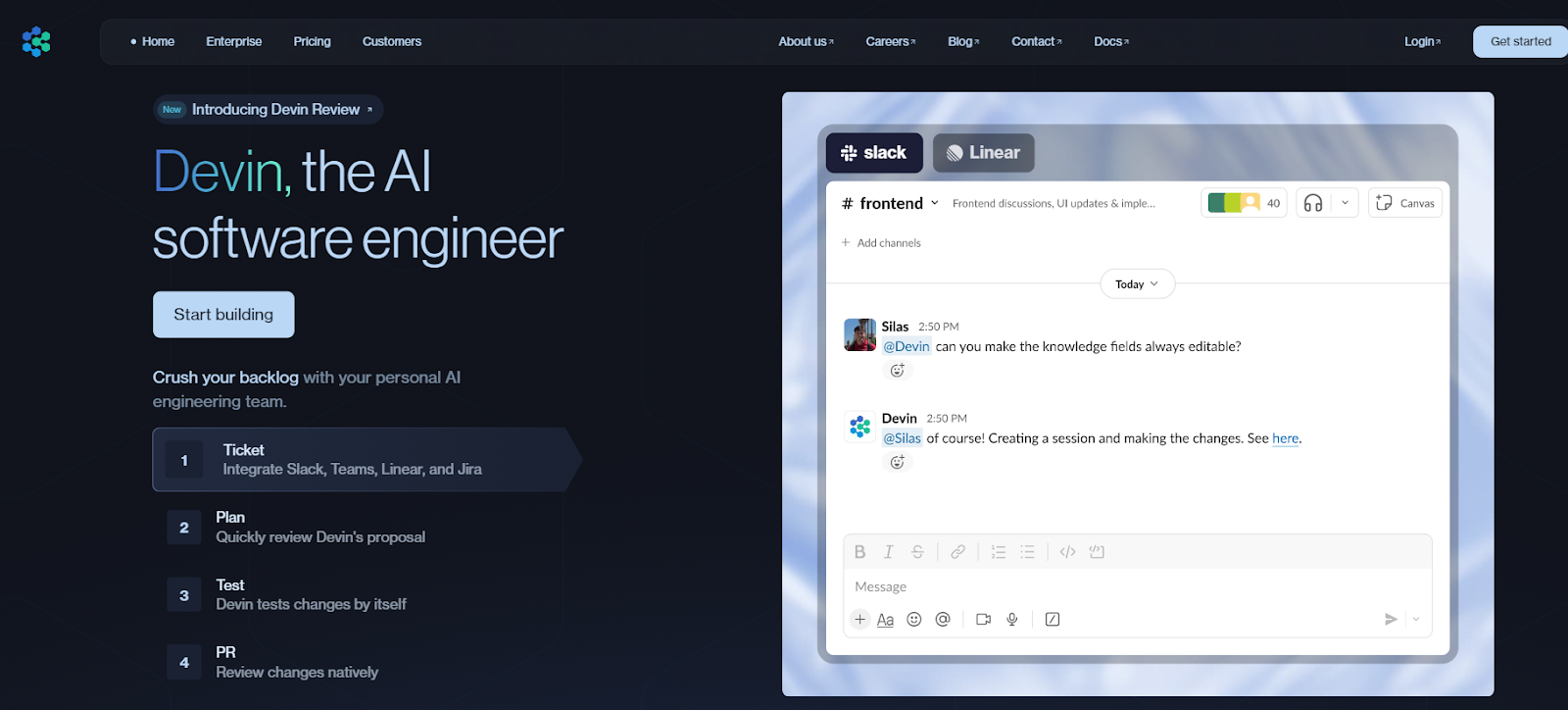

12. Devin

Devin is an autonomous AI software agent built to complete engineering tasks end-to-end.

Instead of working inside your editor, it runs in its own environment with access to a repository, terminal, tests, and browser. You assign a task. Devin plans steps, edits code, runs commands, and iterates until it reaches a result for review.

Best for

- Well-defined engineering tasks with clear success criteria

- Bug fixes, small refactors, and test additions

- Teams willing to supervise an autonomous agent

Where Devin Fits in the Delivery Lifecycle

- Task Execution Layer: takes ownership of scoped engineering tasks

- Autonomous Iteration: edits code, runs tests, and fixes failures

- Human Review Handoff: provides diffs and results for inspection

Devin operates before pull request review. Validation and approval still happen through normal workflows.

Not for

- Open-ended feature development

- Architectural design decisions

- Teams without strong review and testing practices

Devin performs best when the task is clearly defined.

What Devin Does

Devin reads the repository, creates a plan, and executes it.

- Opens and modifies files

- Runs tests and build commands

- Iterates when failures occur

- Explains its reasoning and actions

- Hands back code changes for review

It behaves more like a junior engineer completing assigned work than an inline assistant.

Hands-On: Fixing a Failing Test

In a repository with a failing unit test, I assigned Devin the task:

Fix the failing test in user_service and ensure all tests pass.

Devin explored the module, identified the broken logic, and updated the implementation. It ran the test suite, detected additional failures, and adjusted the code until all tests passed.

It then presented:

- The modified files

- The diff of changes

- The final test output

The changes were not merged automatically. I reviewed the diff and validated behavior before committing. The workflow felt closer to delegating a contained task than to collaborating line by line.
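The run, inspect, fix cycle described above can be sketched as a bounded loop. This is a generic Python illustration of the pattern, not Devin's implementation: `run_until_green`, the two callables it takes, and the toy stand-ins below are all hypothetical.

```python
def run_until_green(run_tests, propose_fix, max_attempts=5):
    """Repeat run -> inspect -> fix until tests pass or attempts run out.

    `run_tests` returns a list of failing test names (empty means green).
    `propose_fix` receives that list and applies a change.
    Returns (passed, history), where history records the failures per attempt.
    """
    history = []
    for _ in range(max_attempts):
        failures = run_tests()
        history.append(failures)
        if not failures:
            return True, history
        propose_fix(failures)
    return False, history


# Toy stand-ins: each fix resolves one failing test per round.
failing = ["test_create_user", "test_delete_user"]


def fake_run_tests():
    return list(failing)


def fake_propose_fix(failures):
    failing.remove(failures[0])


passed, history = run_until_green(fake_run_tests, fake_propose_fix)
print(passed, [len(f) for f in history])
```

The attempt cap matters: without it, an agent that cannot make the tests pass would loop indefinitely instead of handing the problem back for human review.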

Pricing

- Basic: Pay-as-you-go (~$20/month base), usage billed in Agent Compute Units (ACUs)

- Team: ~$500/month (includes bundled ACUs, unlimited sessions, team features)

- Enterprise: Custom pricing (VPC deployment, SSO, admin controls, dedicated support)

Pricing depends on compute usage and deployment model.

13. Replit

Replit is a browser-based development platform that combines an online IDE, runtime, collaboration tools, and deployment in one place. You can start coding without installing anything locally. It is built for fast prototyping and quick app deployment.

Best for

- Quick prototypes, demos, and internal tools

- Solo developers or small teams

- Learning projects and experiments

Where Replit Fits in the Delivery Lifecycle

- Browser IDE: write code directly in the cloud

- Built-in AI: scaffold apps, generate code, and debug

- Live Preview: instant run and inspect cycle

- Integrated Hosting: publish apps directly from the platform

Replit spans authoring, testing, and lightweight deployment in a single environment.

Not for

- Large production systems with complex infrastructure

- Strict regulatory or self-hosted requirements

- Deep CI/CD and infrastructure customization

What Replit Does

Replit reduces setup and deployment friction.

- Provisions environments instantly

- Generates project scaffolding with AI

- Provides live preview with logs

- Supports real-time collaboration

- Allows simple app publishing

It prioritizes speed and convenience over deep infrastructure control.

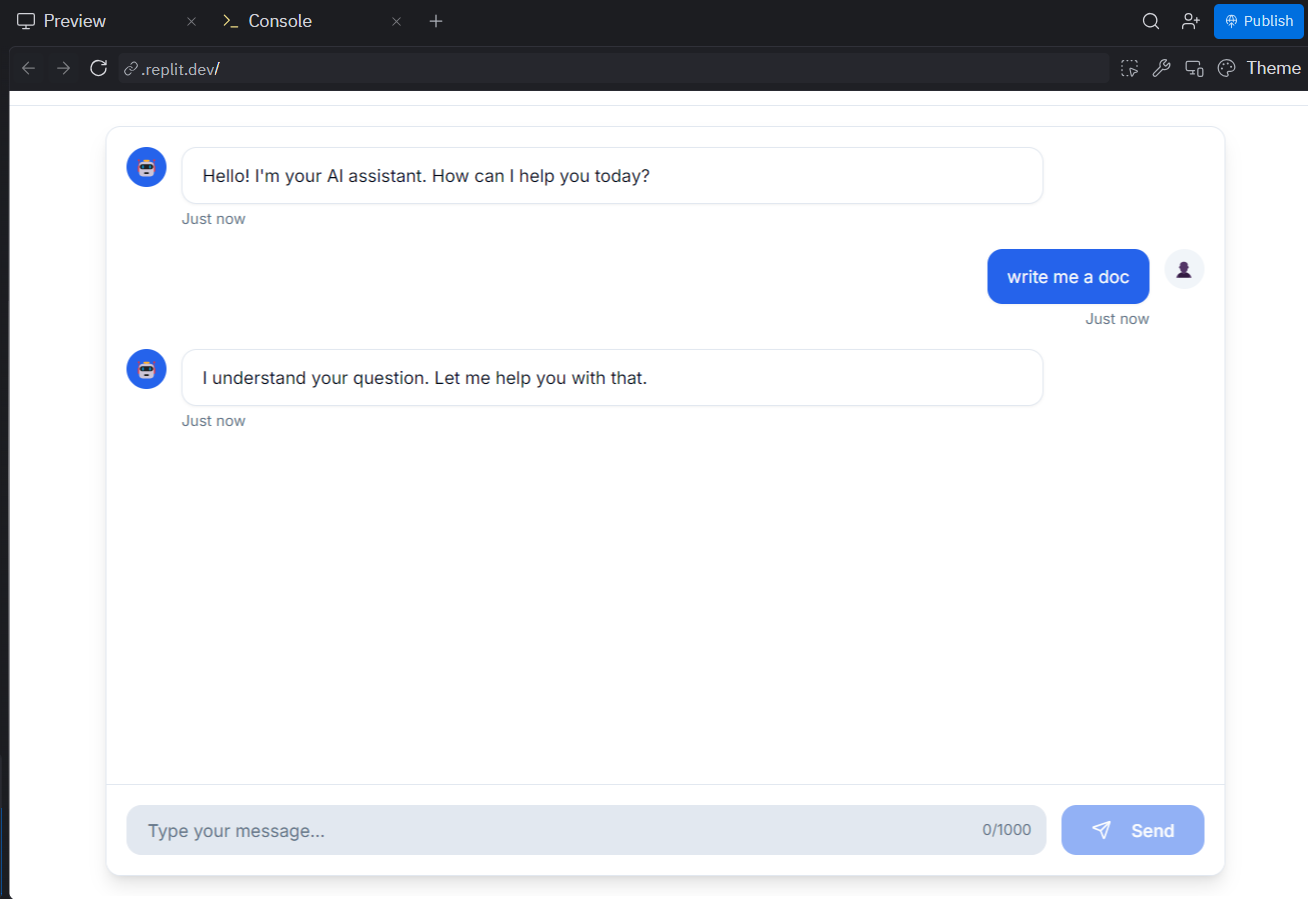

Hands-On: Building a React + Tailwind Chat UI

Inside Replit, I prompted the AI:

Create a simple React + Tailwind UI for an AI assistant with a centered chat window, scrollable messages, a text input box with a Send button, and a theme toggle.

Replit generated a React project with separate components for ChatWindow, Message, and InputBar. It added Tailwind styling, a dark/light theme toggle, and mocked assistant responses, as shown in the snapshot below:

The preview panel updated instantly in the browser. Sending a message triggered a delayed mock response like:

Hello! I'm your AI assistant. How can I help you today?

The file structure was clean and modular. The app ran without additional setup, and I could publish it directly from the same interface. For a prototype, it was ready in minutes.

Pricing

- Starter: Free (limited AI credits, publish one app)

- Basic: $20/month (AI credits, hosting, autonomous builds)

- Teams: $35/user/month (centralized billing, RBAC, private deployments)

Pricing varies based on usage and compute credits.

14. Bolt

Bolt (Bolt.new) is a browser-based AI app builder. You describe the application in natural language, and Bolt scaffolds a working project with a live preview. It is built to reduce setup time and get from idea to running app quickly.

Best for

- Fast prototypes and early MVPs

- Solo builders validating ideas

- Projects where setup time is overhead

Where Bolt Fits in the Delivery Lifecycle

- Prompt-to-App Scaffolding: generates a full project from a description

- Browser-Based Editing: modify code directly in the platform

- Live Preview & Logs: immediate feedback while iterating

- Built-in Publishing: deploy from the same interface

Bolt spans authoring and lightweight deployment in one flow.

Not for

- Long-lived production systems with complex CI/CD

- Strict compliance or self-hosted environments

- Teams needing deep infrastructure customization

What Bolt Does

Bolt generates and wires applications inside its managed environment.

- Scaffolds the frontend and backend structure

- Applies changes from natural-language prompts

- Maintains a live preview while editing

- Supports GitHub import and export

- Reduces early-stage configuration work

It prioritizes speed and convenience over infrastructure flexibility.

Pricing

- Free: Limited monthly token allowance

- Pro: ~$20–$25/month (higher token limits, larger projects)

- Teams: ~$30/user/month (shared usage, centralized billing)

- Enterprise: Custom pricing (advanced security, higher limits, support)

Pricing depends on token usage and plan tier.

15. Lovable

Lovable is a browser-based, prompt-driven app builder that generates full-stack applications.

You describe the product in natural language, and it creates frontend screens, backend logic, routing, and a connected data layer. It is built for getting a working application on screen quickly.

Best for

- MVPs and proof-of-concept apps

- Landing pages and early product validation

- Solo builders or small teams moving fast

Where Lovable Fits in the Delivery Lifecycle

- Prompt-to-App Generation: creates a full app structure from a description

- Conversational Iteration: modify screens and logic through follow-up prompts

- Live Preview: validate UI and flows instantly

- Built-in Deployment: publish from the same platform

Lovable is optimized for early-stage development and quick product validation.

Not for

- Long-lived production systems

- Custom CI/CD and infrastructure control

- Strict regulatory or air-gapped environments

What Lovable Does

Lovable generates a structured, working application.

- Creates frontend components and routing

- Connects backend logic and data models

- Integrates services like authentication and databases

- Maintains project-level context while iterating

- Allows code export

It abstracts setup and configuration to prioritize speed.

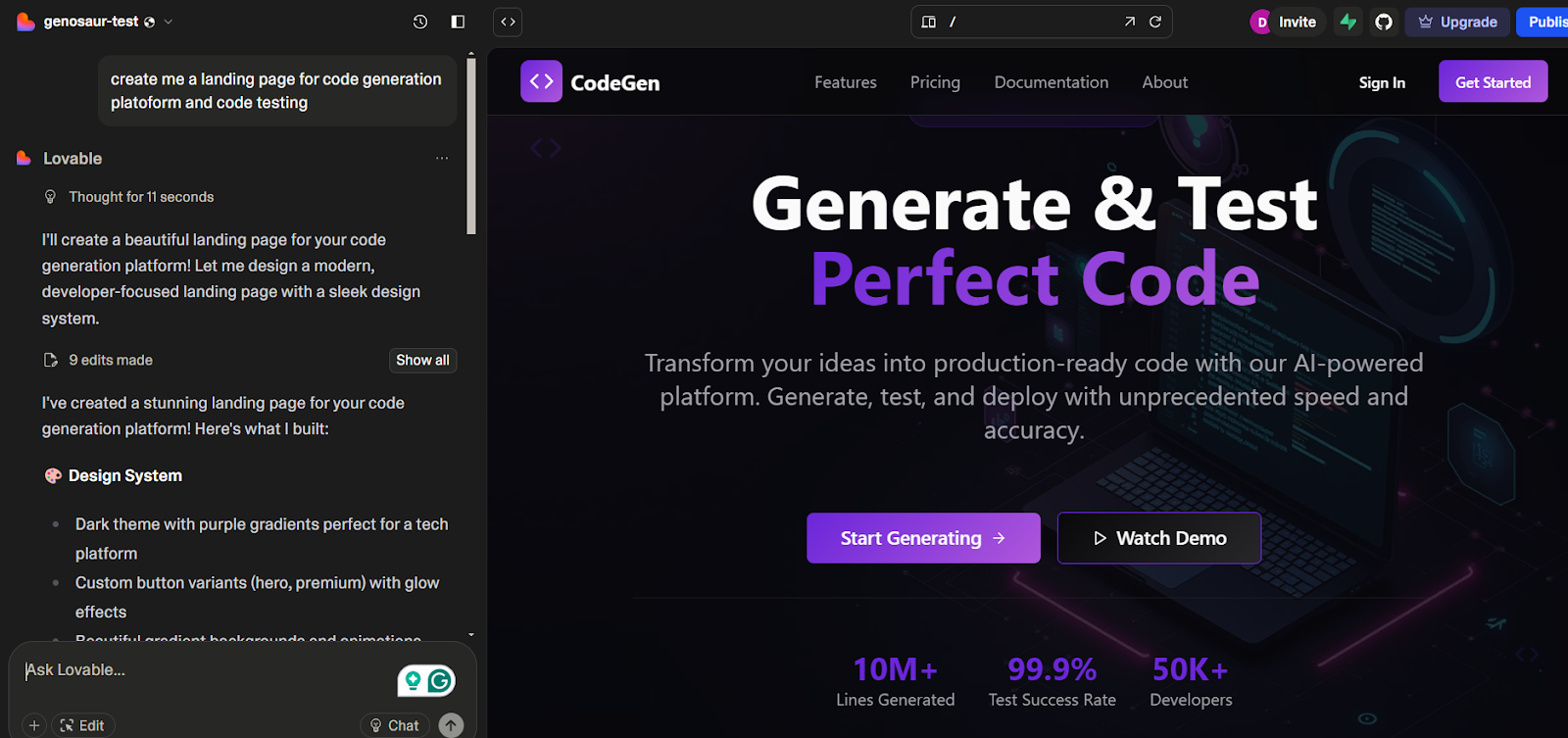

Hands-On: Generating a Developer-Focused Landing Page

I prompted Lovable:

Create a landing page for a platform focused on AI code generation and testing.

Lovable generated a full React-based layout with a dark theme, bold typography, and modular components such as CTA.tsx. It scaffolded sections for headline, metrics, and call-to-action buttons, as visible in the snapshot.

The preview rendered instantly in the browser. The layout looked polished and structured, with reusable components and consistent spacing.

The structure followed common landing page patterns. Customizing it for deeper product flows or API integrations required manual edits.

For a presentable front-end starting point, it delivered in minutes.

Pricing

- Free: Limited daily credits, public projects

- Pro: ~$25/month (private projects, custom domains, higher credit limits)

- Business: ~$50/month (SSO, role-based permissions, expanded usage)

- Enterprise: Custom pricing (dedicated support, advanced controls, design systems)

Pricing depends on credit usage and plan tier.

How These Tools Actually Compare

Before looking at the table, here’s what I tested them on. The focus is real workflow impact, not feature lists:

- Code Quality & Accuracy: Does it generate code you can realistically ship, or do you spend time fixing what it generated?

- Context Awareness: Does it understand your project structure and related files, or just the snippet in front of it?

- Security & Compliance: Does it help prevent insecure or non-compliant code from reaching production?

- IDE & Workflow Integration: Does it fit into your existing editor, PR flow, and CI/CD without forcing a process change?

Now, here’s how the tools stack up against the criteria listed above.

AI Code Assistants: Comparison (2026)

| Tool | Code Quality & Accuracy | Context Awareness | Security & Compliance | IDE & Workflow Integration |
| --- | --- | --- | --- | --- |
| Qodo | High, validates PR changes and enforces standards | High, multi-repo and PR-level context | High, merge gating, compliance rules, enterprise controls | High, Git providers, CLI, CI/CD |
| Snyk Code | High for security flaws | Medium, repo-level data flow analysis | High, SAST and vulnerability scanning | High, IDE plugins and CI integration |
| GitHub Copilot | Medium, strong for scaffolding and boilerplate | Low, mostly file-level | Low, no built-in enforcement | High, seamless IDE integration |
| Cursor | Medium, strong for multi-file refactors | High, project-level awareness | Low, no governance layer | AI-native editor |
| Windsurf | Medium, solid structural edits | High, project-scoped AI edits | Low, limited enforcement | AI-native editor |
| JetBrains AI | Medium, benefits from IDE indexing | Medium, project-aware | Medium, enterprise privacy controls | High, native IDE experience |
| Tabnine | Medium, predictable completions | Low, local context | High, strong privacy and on-prem options | High, broad IDE support |
| Amazon Q Developer | Medium, strong AWS usage patterns | Medium, AWS-aware context | Medium, IAM-backed controls | Medium, IDE + AWS tooling |
| Gemini Code Assist | Medium, strong cloud patterns | Medium, GCP-aware context | Medium, enterprise tiers available | High, strong IDE coverage |
| Claude Code | Medium–High when supervised | High, repo-aware agent reasoning | Medium, depends on guardrails | Medium, CLI-driven |
| Aider | Medium, clean Git-based diffs | Medium, repo-scoped | Medium, local/self-hosted flexibility | Medium, Git-native CLI |
| Devin | Variable, strong on bounded tasks | High, task-level repo exploration | Low–Medium, requires strong guardrails | Low, separate execution environment |
| Replit | Medium, strong for prototypes | Medium, workspace-scoped | Low, managed cloud runtime | High, browser IDE + deploy |
| Bolt | Low–Medium, fast MVP scaffolding | Low, platform-scoped | Low, limited infra control | Medium, browser workflow |
| Lovable | Low–Medium, strong for demos | Medium, project-level state | Low, managed platform | Medium, browser workflow |

What This Comparison Really Shows

If you look at the table closely, a pattern becomes obvious. Most AI tools are built to help you write code faster. They reduce friction while typing, help scaffold features, or assist with refactors. AI-first editors improve how you navigate and modify existing code. Agent tools can coordinate larger, multi-file changes. App builders help you go from idea to a working demo quickly. But very few tools are designed around a different question: Is this change safe to merge?

Conclusion: How to Structure Your AI Stack

After testing these tools in real workflows, the biggest takeaway is simple: they don’t compete, they layer. Editor assistants help you move faster while writing code. Agents handle multi-file changes and structured tasks. Security tools flag exploitable issues. An AI code review platform like Qodo validates pull requests before merging. The teams achieving consistent results in 2026 aren’t trying to replace their workflows with AI; they’re defining where each tool fits within them. Once those boundaries are clear, velocity increases without compromising code quality.

FAQs

1. What are the best AI code assistants in 2026?

The best AI code assistants in 2026 depend on what problem you’re solving. GitHub Copilot, JetBrains AI, Tabnine, Gemini Code Assist, and Amazon Q help generate code inside the editor. Cursor, Claude Code, Aider, and Devin assist with multi-file refactors and repository-level tasks. For pull-request validation and merge readiness, AI code review platforms like Qodo focus on analyzing diffs, enforcing standards, and minimizing code review risk before code reaches production.

2. How do AI code review platforms differ from editor-based assistants?

Editor-based assistants generate code while you write it. AI code review platforms operate at the pull request stage. Instead of producing code, they analyze what changed, check for security risks, missing tests, and policy violations, and determine whether a PR is ready to merge. Platforms like Qodo are designed specifically for this pre-merge enforcement layer rather than inline code generation.

3. What is context-aware AI code review?

Context-aware AI code review evaluates changes using repository-wide and, in some cases, multi-repository context. This includes understanding shared modules, test impact, architectural patterns, and organizational rules. Without that context, tools tend to operate at the file level and miss broader risks. Platforms like Qodo use an indexed repository context to improve signal quality in pull-request reviews.
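
One way to picture the difference between file-level and repository-level context is a dependency walk. The sketch below is a simplified illustration, not how Qodo or any specific platform indexes a repository. The `ImportGraph` shape, the `impactedFiles` function, and the file paths are all hypothetical.

```typescript
// Hypothetical import graph: each file maps to the files it imports.
type ImportGraph = Record<string, string[]>;

// Returns every file that depends, directly or transitively, on a changed file.
function impactedFiles(graph: ImportGraph, changed: string[]): string[] {
  // Invert the graph: imported file -> files that import it.
  const dependents = new Map<string, string[]>();
  for (const file of Object.keys(graph)) {
    for (const imported of graph[file] ?? []) {
      const list = dependents.get(imported) ?? [];
      list.push(file);
      dependents.set(imported, list);
    }
  }

  // Breadth-first walk from the changed files through their dependents.
  const seen = new Set<string>(changed);
  const queue: string[] = [...changed];
  while (queue.length > 0) {
    const current = queue.shift() as string;
    for (const dependent of dependents.get(current) ?? []) {
      if (!seen.has(dependent)) {
        seen.add(dependent);
        queue.push(dependent);
      }
    }
  }

  // Report only files outside the diff itself, sorted for stable output.
  return Array.from(seen).filter((f) => !changed.includes(f)).sort();
}

// Example repository (made-up paths).
const exampleGraph: ImportGraph = {
  "shared/money.ts": [],
  "billing/invoice.ts": ["shared/money.ts"],
  "billing/invoice.test.ts": ["billing/invoice.ts"],
  "docs/site.ts": [],
};

const impacted = impactedFiles(exampleGraph, ["shared/money.ts"]);
// impacted is ["billing/invoice.test.ts", "billing/invoice.ts"]
```

A tool that only sees the diff to `shared/money.ts` has no way to know that the invoice module and its tests sit downstream of the change. A repository-level index makes that relationship available to the review.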

4. How do AI tools enforce coding standards and compliance in PRs?

AI code review platforms enforce standards by scanning pull request diffs against configurable rules. These rules can include security checks, required test coverage, validation of ticket linkage, naming conventions, and organization-specific policies. In systems like Qodo, blocking rules can prevent merges until critical issues are resolved, effectively acting as an automated quality gate inside CI/CD workflows.
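
The idea of blocking versus advisory rules can be sketched in a few lines of TypeScript. This is a toy model under stated assumptions, not Qodo's configuration format or API. The `Rule`, `PullRequest`, and `evaluateGate` names, the three example rules, and the path conventions (`src/`, `.test.`) are hypothetical.

```typescript
// Illustrative quality gate: hypothetical types, not any vendor's API.
interface ChangedFile {
  path: string;
  addedLines: string[];
}

interface PullRequest {
  title: string;
  files: ChangedFile[];
}

interface Rule {
  name: string;
  blocking: boolean; // blocking rules stop the merge; others only warn
  check: (pr: PullRequest) => string | null; // violation message, or null if OK
}

interface Violation {
  rule: string;
  blocking: boolean;
  message: string;
}

const isTestFile = (path: string): boolean => path.includes(".test.");

const exampleRules: Rule[] = [
  {
    name: "ticket-linkage",
    blocking: true,
    check: (pr) => (/[A-Z]+-\d+/.test(pr.title) ? null : "PR title has no ticket ID"),
  },
  {
    name: "tests-required",
    blocking: true,
    check: (pr) => {
      const touchesSource = pr.files.some((f) => f.path.startsWith("src/") && !isTestFile(f.path));
      const touchesTests = pr.files.some((f) => isTestFile(f.path));
      return touchesSource && !touchesTests ? "Source changed without test changes" : null;
    },
  },
  {
    name: "no-console-log",
    blocking: false,
    check: (pr) =>
      pr.files.some((f) => f.addedLines.some((line) => line.includes("console.log(")))
        ? "Added lines contain console.log"
        : null,
  },
];

// Runs every rule and reports whether any blocking rule was violated.
function evaluateGate(pr: PullRequest, rules: Rule[]): { mergeable: boolean; violations: Violation[] } {
  const violations: Violation[] = [];
  for (const rule of rules) {
    const message = rule.check(pr);
    if (message !== null) {
      violations.push({ rule: rule.name, blocking: rule.blocking, message });
    }
  }
  return { mergeable: !violations.some((v) => v.blocking), violations };
}
```

In a CI job, a result with `mergeable: false` would typically map to a failed status check, while non-blocking violations would surface as review comments. Real platforms layer semantic analysis on top of pattern checks like these.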

5. Can AI code assistants replace manual code review?

AI tools can automate large parts of code review, including security checks, test coverage validation, and standards enforcement. With an AI code review platform like Qodo, merge decisions can be backed by consistent, context-aware analysis of pull request diffs before approval. Human reviewers still handle architectural intent and business logic, but objective validation can be automated at scale.

6. Which AI tools work best for large, multi-repo teams?

Large teams working across multiple services benefit from tools that understand broader repository context and enforce standards consistently. Editor assistants help with local productivity, but they don’t provide organizational governance. AI code review platforms such as Qodo are built to scale across multiple repositories, applying consistent review logic and compliance checks before merge.